Rebuilding the homelab: The Talos Principle

Published on , 2132 words, 8 minutes to read

Maybe there is light at the end of the tunnel.

This content is exclusive to my patrons. If you are not a patron, please don't be the reason I need to make a process more complicated than the honor system. This will be made public in the future, once the series is finished.

My homelab consists of a few machines running NixOS. I've been put into facts and circumstances beyond my control that make me need to reconsider my life choices. I'm going to be rebuilding my homelab and documenting the process in this series of posts.

Each of these posts will contain one small part of my journey so that I can keep track of what I've tried and you can follow along at home.

Why I homelab

One of the biggest bits of feedback I got on an earlier draft of part 1 was that readers weren't sure why I was making a homelab in the first place. As in, what problem was I trying to solve by doing this?

I want to have a homelab so that I can have a place to "just run things". I want to be able to spin up and try out new things without having to worry about cloud provider cost or the burden of setting up new servers every time something new comes across my radar. I just wanna have a place to fuck around, find out, and write these posts for all you lovely people.

My suffering makes you people happy for some reason, so let's see how bad it can get!

The Talos Principle

Straton of Stageira once argued that the mythical construct Talos (an automaton that experienced qualia and had sapience) proved that there was nothing special about mankind. If a product of human engineering could have the same kind of qualia that people do, then realistically there is nothing special about people when compared to machines.

To say that Talos Linux is minimal is a massive understatement. It literally only has 12 binaries in it. I've been conceptualizing it as "what if gokrazy was production-worthy?".

Either way, my main introduction to it was last year at All Systems Go! by a fellow speaker. I'd been wanting to try something like this out for a while, but I haven't had a good excuse to sample those waters until now. It's really intriguing because of how damn minimal it is.

So I downloaded the arm64 ISO and set up a VM on my MacBook to fuck around with it. Here are a few of the things that I learned in the process:

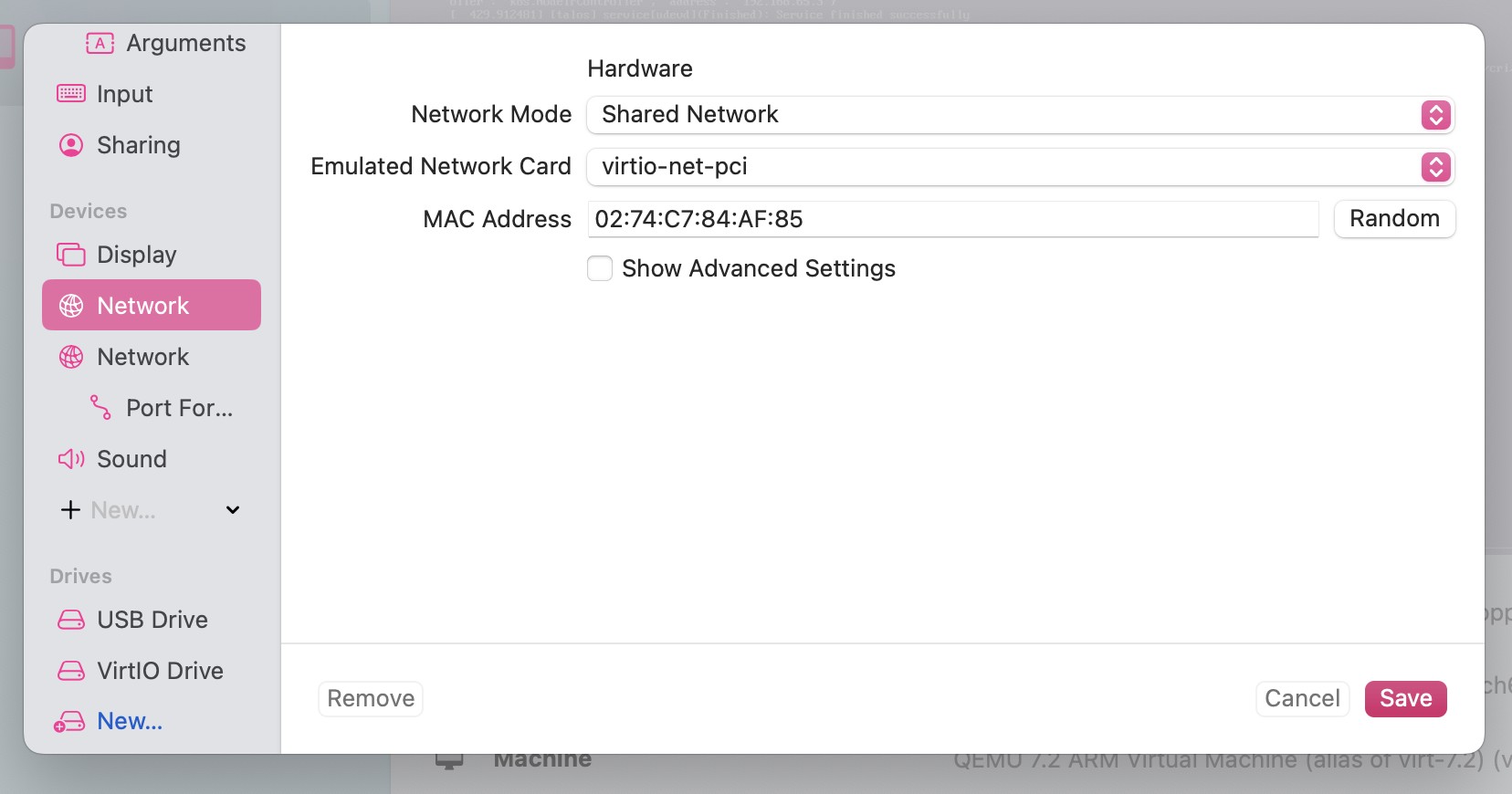

UTM has two modes it can run a VM in. One is "Apple Virtualization" mode, which gives you theoretically higher performance at the cost of fewer options when it comes to networking. In order to connect the VM to a shared network (so you can poke it directly with talosctl commands), you need to create it without "Apple Virtualization" checked. This does mean you can't expose Rosetta to run amd64 binaries, but that's an acceptable tradeoff IMO.

Talos Linux is completely declarative for the base system and really just exists to make Kubernetes easier to run. One of my favorite parts has to be the way that you can combine different configuration snippets together into a composite machine config. Let's say you have a base "control plane config" in controlplane.yaml and some host-specific config in hosts/hostname.yaml. Your talosctl apply-config command would look like this:

talosctl apply-config -n kos-mos -f controlplane.yaml -p @patches/subnets.yaml -p @hosts/kos-mos.yaml

This allows your hosts/kos-mos.yaml file to look like this:

cluster:
  apiServer:
    certSANs:
      - 100.110.6.17
machine:
  network:
    hostname: kos-mos
  install:
    disk: /dev/nvme0n1

which allows me to keep generic settings cluster-wide and then layer specific settings on top for each host (just like I have with my Nix flakes repo). For example, I have a few homelab nodes with NVIDIA GPUs that I'd like to be able to run AI/large langle mangle tasks on. I can set up the base config to handle generic cases and then enable the GPU drivers only on the nodes that need them.
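If you wanted to push the same layered config to several nodes in one go, a small wrapper could do it. This is only a sketch: the function names `apply_host_config` and `apply_all` are made up for illustration, and it assumes every node has a matching `hosts/<node>.yaml` patch file next to `controlplane.yaml` and `patches/subnets.yaml`, mirroring the command above.

```shell
# Apply the shared control plane config plus one host's patch file.
# Assumes hosts/<node>.yaml exists for the node (hypothetical layout that
# mirrors the single-node command above).
apply_host_config() {
  talosctl apply-config \
    -n "$1" \
    -f controlplane.yaml \
    -p @patches/subnets.yaml \
    -p "@hosts/$1.yaml"
}

# Run it for every node given as an argument, stopping at the first failure.
apply_all() {
  for node in "$@"; do
    apply_host_config "$node" || return 1
  done
}
```

Something like `apply_all kos-mos ontos chrysalis` would then apply the base config and the right host patch to each node in turn.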

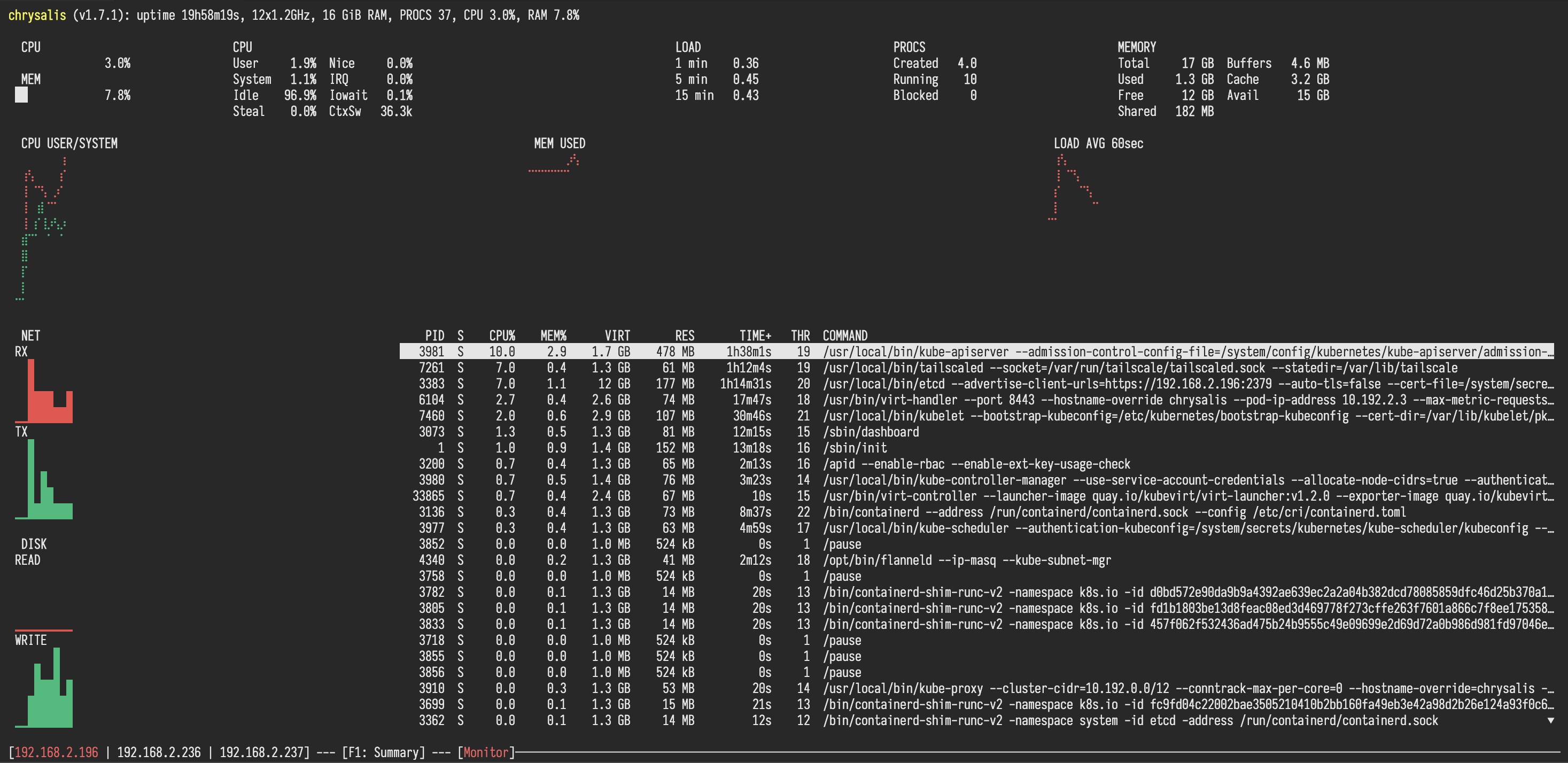

The Talosctl Dashboard

I just have to take a moment to gush about the talosctl dashboard command. It's a TUI that lets you see what your nodes are doing. When you boot a metal Talos Linux node, it opens the dashboard by default so you can watch the logs as the system wakes up and becomes active.

When you run it on your laptop, it's as good as, if not better than, having SSH access to the node. All the information you could want is right there at a glance and you can connect to multiple machines at once. Just look at this:

Those three nodes can be swapped between by pressing the left and right arrow keys. It's the best kind of simple, the kind that you don't have to think about in order to use it. No documentation needed, just run the command and go on instinct. I love it.
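For reference, connecting to several machines at once comes down to passing a comma-separated list to `-n`. Here is a minimal sketch of a helper that builds that list from its arguments. The name `talos_dash` is hypothetical, and it assumes your talosconfig already knows how to reach the nodes.

```shell
# Open one dashboard session covering every node given as an argument.
# talosctl takes a comma-separated list for -n/--nodes.
talos_dash() {
  if [ "$#" -eq 0 ]; then
    echo "usage: talos_dash NODE [NODE...]" >&2
    return 1
  fi
  nodes="$(printf '%s,' "$@")"        # join arguments: "a,b,c,"
  talosctl dashboard -n "${nodes%,}"  # strip the trailing comma
}
```

Running `talos_dash kos-mos ontos chrysalis` should be equivalent to typing `talosctl dashboard -n kos-mos,ontos,chrysalis` by hand.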

Making myself a Kubernete

Talos Linux is built to do two things:

- Boot into Linux

- Run Kubernetes

That's it. So let's make a kubernete in my homelab!

I decided to start with kos-mos arbitrarily (mostly because I'd already evacuated its work to other homelab nodes). I downloaded the ISO and tried to use balenaEtcher to flash it to a USB drive, and then Windows decided that now was the perfect time to start interrupting me with bullshit related to Explorer desperately trying to find and mount USB drives.

I was unable to use balenaEtcher to write it, but then I found out that Rufus can write ISOs to USB drives in a way that doesn't rely on Windows to do the mounting or writing. That worked and I had kos-mos up and running in short order.

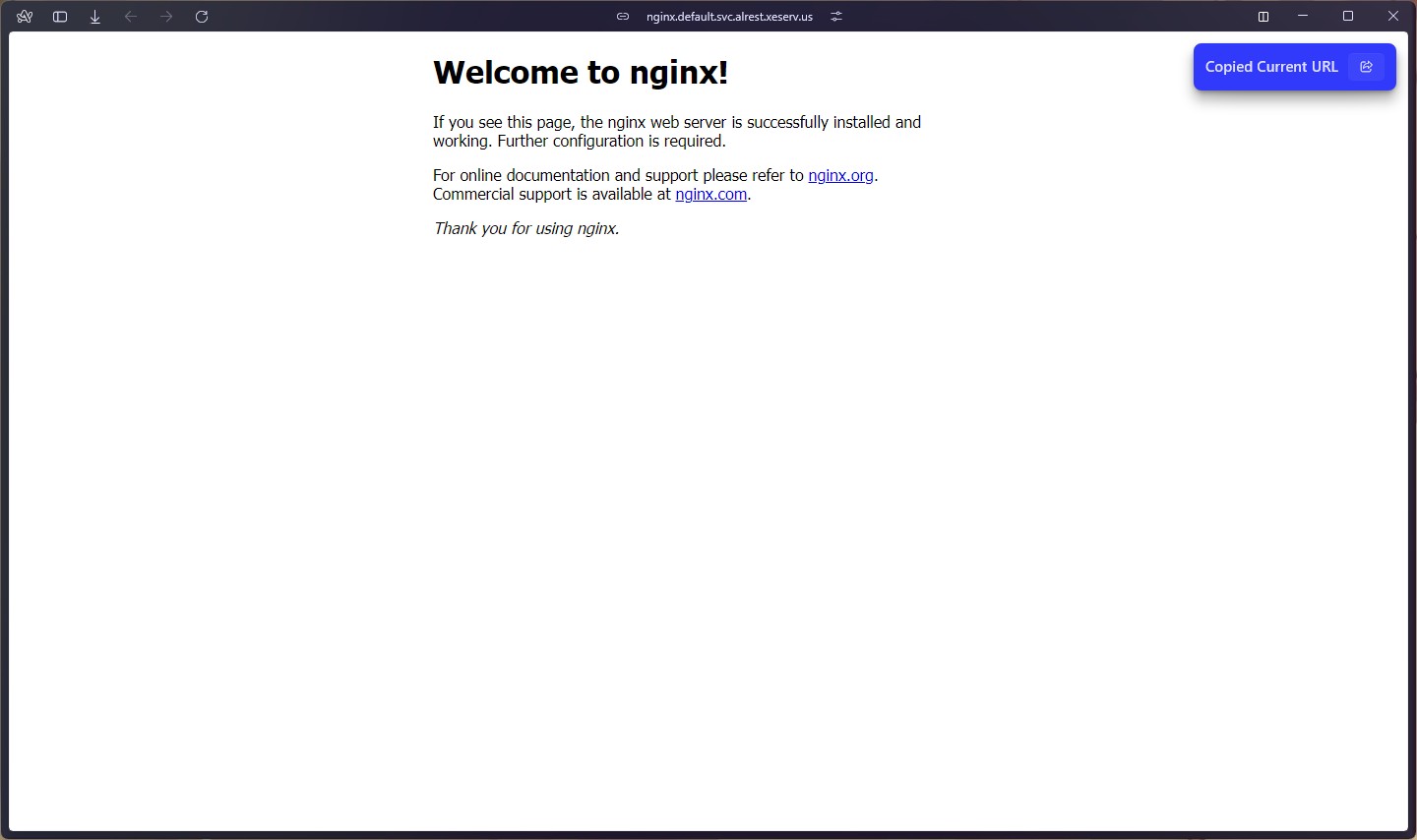

After bootstrapping etcd and exposing the subnet routes, I made an nginx deployment with a service as a "hello world" to ensure that things were working properly. Here's the configuration I used:

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
  labels:
    app.kubernetes.io/name: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app.kubernetes.io/name: nginx
  template:
    metadata:
      labels:
        app.kubernetes.io/name: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:1.14.2
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx
spec:
  selector:
    app.kubernetes.io/name: nginx
  ports:
    - protocol: TCP
      port: 80
      targetPort: 80
  type: ClusterIP
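One way to check that a manifest like this actually works is to apply it, wait for the rollout, and then curl the Service from inside the cluster. The sketch below makes a few assumptions that are not from the original setup: the manifest is saved as `nginx.yaml`, everything lives in the default namespace, and the throwaway pod uses the public `curlimages/curl` image. The function name `smoke_test_nginx` is made up for illustration.

```shell
# Apply the manifest, wait for all replicas to roll out, then fetch the
# nginx welcome page through the ClusterIP Service from a temporary pod.
# nginx.yaml and curlimages/curl are assumptions, not part of the original.
smoke_test_nginx() {
  kubectl apply -f nginx.yaml &&
    kubectl rollout status deployment/nginx --timeout=120s &&
    kubectl run nginx-smoke --rm -i --restart=Never \
      --image=curlimages/curl -- curl -fsS http://nginx
}
```

If the Service selector matches the pod labels, the last step should print the nginx welcome page HTML, and the temporary pod is removed afterwards because of `--rm`.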

Once this is up, you're golden. You can start deploying more things to your cluster and then they can talk to each other. One of the first things I deployed was a Reddit/Discord bot that I maintain for a community I've been in for a long time. It's a simple stateless bot that only needs a single deployment to run. You can see its source code and deployment manifest here.

The only weird part here is that I needed to set up secrets for handling the bot's Discord webhook. I don't have a secret vault set up (looking into setting up the 1Password one out of convenience because I already use it at home), so I yolo-created the secret with kubectl create secret generic sapientwindex --from-literal=DISCORD_WEBHOOK_URL=https://discord.com/api/webhooks/1234567890/ABC123 and then mounted it into the pod as an environment variable. The relevant yaml snippet is under the bot container's env key:

env:
  - name: DISCORD_WEBHOOK_URL
    valueFrom:
      secretKeyRef:
        name: sapientwindex
        key: DISCORD_WEBHOOK_URL

This is a little more verbose than I'd like, but I understand why it has to be this way. Kubernetes is the most generic tool you can make, and as such it has to be able to adapt to any workflow you can imagine. Kubernetes manifests can't afford to make too many assumptions, so they simply elect not to as much as possible. That means you need to spell out all your assumptions by hand.

I'll get this refined in the future with templates or whatever, but for now my favorite part about it is that it works.
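If the bot ever complains about a missing webhook, it can help to confirm what the Secret actually contains. Secret values are stored base64-encoded, so a small helper can fetch one key and decode it. This is a sketch under the assumption that the Secret lives in your current namespace. The name `secret_value` is hypothetical.

```shell
# Print the decoded value of one key from a Secret.
# Usage: secret_value SECRET_NAME KEY
# Note: this prints the secret in plain text to your terminal.
secret_value() {
  kubectl get secret "$1" -o "jsonpath={.data.$2}" | base64 --decode
}
```

For the secret created above, that would be `secret_value sapientwindex DISCORD_WEBHOOK_URL`.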

The factory cluster must grow

After I got that working, I connected some other nodes and I've ended up with this:

$ kubectl get nodes
NAME        STATUS   ROLES           AGE   VERSION
chrysalis   Ready    control-plane   20h   v1.30.0
kos-mos     Ready    control-plane   20h   v1.30.0
ontos       Ready    control-plane   20h   v1.30.0

Later today I plan to get logos out of mothballs, back up the AI stuff it was doing to the NAS, and then shove it into the cluster. It has a spare SSD in it, so I'd be able to run some stateful workloads on it. I'm thinking of setting up OpenEBS so that I can get persistent volumes that survive between nodes.

Not to mention playing with KubeVirt, because that makes most of what I have made in waifud irrelevant. It handles VMs perfectly well for my use cases and I can build whatever waifud will become on top of that.

Facts and circumstances may have changed since publication. Please contact me before jumping to conclusions if something seems wrong or unclear.

Tags: homelab, talosLinux, k8s, kubernetes