Push notification two-factor auth considered harmful

Published on , 3751 words, 14 minutes to read

Sooooo Uber got popped. The hacker-internal rumor mill is churning out tons of scuttlebutt, but something came up that I've seen before and that has made me adopt a radical new stance. I hope to explain it in this post for you all to learn from. Come along and join Cendyne and me on this magical journey!

tl;dr

Authentication is a hard problem for humans. It is even harder for computers. In general though, we have three methods or "factors" that we can use to do authentication:

- Something you are, like a retina or fingerprint scan

- Something you know, like a password

- Something you have, like a hardware two-factor auth token or authentication app that supports push notification based two-factor authentication

Most of the time you don't see retina and fingerprint scans required to log into Facebook. For a lot of people it's usually just that single password between anyone and their entire reputation being spoiled. There are efforts to try and get hardware authentication tokens into the hands of as many people as possible, but those are honestly a bad UX (user experience) compared to the "simplicity" of using a password. I doubt I'd be able to explain how to use a Yubikey to my grandmother, much less how to use a TOTP code generator.

I think that issuing everyone in the company a Yubikey and making every internal system work with that would be a better option. I think this because of the core problem of phishing: it works best when you are less vigilant. Many two factor authentication mechanisms lend themselves to phishing because of how they work. Here are my cynical thoughts about some common ones.

TOTP Codes

Many online services support TOTP code generation as a two-factor authentication mechanism. These work by sharing a secret value between the client and server. Both sides take this secret, put in the current time and generate a six digit code that way.
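To make that concrete, here is a minimal sketch of RFC 6238 style code generation using only the Python standard library. It assumes HMAC-SHA1, a 30 second time step, and six digits, which are common defaults rather than anything a particular service is known to use. The function names `hotp` and `totp` are made up for this example.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) for a given counter."""
    # The counter is encoded as an 8-byte big-endian integer.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low nibble of the last byte picks a 4-byte window.
    offset = mac[-1] & 0x0F
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10**digits).zfill(digits)


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based code: the counter is the number of steps since the epoch."""
    return hotp(secret, unix_time // step, digits)


# Both sides hold the same secret, so both compute the same code.
shared_secret = b"12345678901234567890"  # published RFC test secret, not a real one
print(totp(shared_secret, 59))
```

In a real deployment the `unix_time` argument would come from the clock on each side, and servers usually also accept the codes for the neighbouring time steps to tolerate clock drift.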

However, six digits is a very small space to search through when you are a computer. The biggest problem is going to be getting lucky: each guess is quite literally a one-in-a-million shot. It turns out you can brute force a TOTP code in about 2 hours if you are careful and the remote service doesn't throttle or rate limit authentication attempts.
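For a feel of where a figure like that can come from, here is some back-of-the-envelope arithmetic. It treats every guess as an independent one-in-a-million trial and assumes an attacker rate of 100 guesses per second. Both are assumptions made for illustration, not measurements of any real service.

```python
import math


def attempts_needed(p_single: float, target: float) -> int:
    """Guesses needed so that P(at least one hit) reaches `target`.

    Treats every guess as an independent trial with success chance
    `p_single`, which is a simplification.
    """
    # 1 - (1 - p)^n >= target  =>  n >= log(1 - target) / log(1 - p)
    return math.ceil(math.log(1.0 - target) / math.log1p(-p_single))


P_SINGLE = 1 / 10**6       # one valid six-digit code out of a million
GUESSES_PER_SECOND = 100   # assumed attacker rate, not a measured figure

n = attempts_needed(P_SINGLE, 0.5)
hours = n / GUESSES_PER_SECOND / 3600
print(f"{n} guesses, about {hours:.1f} hours for a 50% chance")
```

Under these assumptions a coin-flip chance of success takes a bit under two hours. A server that also accepts codes from adjacent time steps raises the per-guess odds and shortens that further, while any sane rate limit makes the whole exercise impractical.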

Oh not to mention, the way that TOTP codes work basically means that it's trivial for an attacker to create an identical flow to your authentication setup on a phishing site hosted on a bulletproof host and then sniff credentials that way. Once the phisher has your password and a TOTP code, they can just use it and redirect you to the actual login form, making you think it failed and you need to try again. The protocol practically enables phishing with relative ease. It's horrifying that this is the security best practice for Discord and Twitter users.

Yubikey Press

Yubikeys have multiple authentication methods built in. One of them is a legacy authentication method that makes the Yubikey pretend to be a keyboard and type out a long, complicated code derived from a secret key stored on the device. This was the main barrier between any employee and arbitrary user accounts at a past job. You got a corp-issued Yubikey and you had to use Yubikey presses to get into secure areas of the admin panel.

This is also phishable, for example by shitposters on Twitter.

So those are out of the question when it comes to protecting production access. All similar code-based two-factor authentication methods suffer from the same phishability problem.

Notification Fatigue As-A-Service

Another common way to do two-factor authentication is to have a user sign into a mobile app. After that, when you sign in on your computer, it sends a push notification to your phone. Then you accept the authentication and you can go post your Minecraft seeds to Facebook Marketplace or whatever.

Google and Facebook have these available as two-factor authentication methods. This means that a significant percentage of people on the planet likely use this authentication method. It is hilariously insecure in practice but makes people think that it is safe. I mean, you trust your phone, right?

Apple is another example, but instead of an app they hook into the desktop and mobile operating system with your iCloud account. Though, Apple requires a code to be manually relayed into the other device, which makes these a little harder to accidentally accept. Nothing a little phishing prep can’t get around.

Allegedly that's what the Uber hacker did. They spammed two-factor auth notifications over and over for an hour while the poor victim was trying to sleep. You know, when your guard is down and you are more likely to act on instinct just to make the noise box shut up. Honestly, as an information security professional I almost have to give that attack method credit, especially the part where they sent the person a message on WhatsApp pretending to be IT, saying they needed to approve the request to secure access to their account. It's ingenious and I'd probably fall for it.

Maybe we should consider this entire two factor authentication mechanism to be harmful towards reaching security goals. It looks impressive to executives, who are the ones that are usually making the decisions about the security products for some reason.

So you may be wondering what you should use instead.

I'm not really sure what the best solution is here, but I can suggest something that I think would help reduce harm: WebAuthn.

WebAuthn

WebAuthn is a protocol for using hardware devices in order to authenticate users by proof of ownership. The basic idea is that you have a hardware security module of some kind (such as an iPhone’s Secure Enclave or a Yubikey) that contains a private key, and then the server validates signatures against the public key of the device to authenticate sessions. It is also set up so that phishing attacks are impossible to pull off: each WebAuthn registration is bound to the domain it was set up with. A keypair for xeiaso.net cannot be used to authenticate with evil-xeiaso.net.
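The domain binding shows up in the data the server checks. The following is a rough sketch of two of the server-side checks on a WebAuthn login response, assuming the client data JSON and authenticator data layouts from the W3C spec. It deliberately leaves out signature verification and everything else a real relying party needs, and the helper names are hypothetical.

```python
import base64
import hashlib
import hmac
import json


def b64url_decode(text: str) -> bytes:
    """Decode unpadded base64url, as used for the WebAuthn challenge."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def check_client_data(client_data_json: bytes, expected_challenge: bytes,
                      expected_origin: str) -> bool:
    """Check the browser-reported type, challenge, and origin."""
    data = json.loads(client_data_json)
    if data.get("type") != "webauthn.get":
        return False
    try:
        challenge = b64url_decode(str(data.get("challenge", "")))
    except ValueError:
        return False
    if not hmac.compare_digest(challenge, expected_challenge):
        return False
    # The browser fills in the origin, so a phishing page reports its own.
    return data.get("origin") == expected_origin


def check_rp_id_hash(authenticator_data: bytes, rp_id: str) -> bool:
    """The first 32 bytes are SHA-256 of the relying party ID (the domain)."""
    expected = hashlib.sha256(rp_id.encode("utf-8")).digest()
    return hmac.compare_digest(authenticator_data[:32], expected)
```

Because both values are covered by the authenticator's signature, a response produced on evil-xeiaso.net fails these checks when replayed against xeiaso.net. In practice you would lean on a maintained WebAuthn library rather than hand-rolling this.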

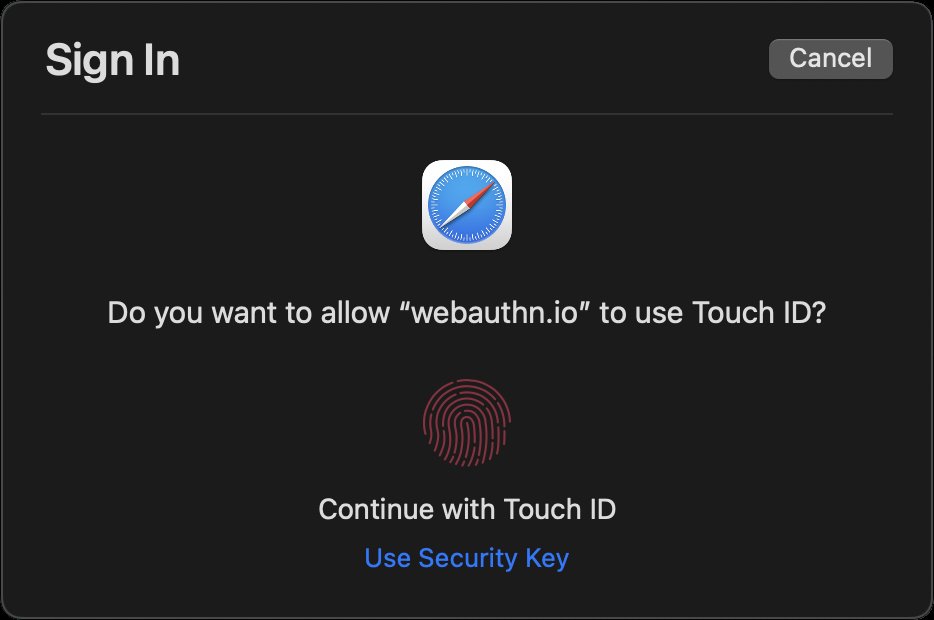

The user experience is fantastic. A website makes a request to the authentication API and then the browser spawns an authentication window in a way that cannot be replicated with web technologies. The browser itself will ask you which authenticator you want to use (usually this lets you pick between an embedded hardware security module or a USB security key) and then proceed from there. It is impossible to phish this. Even if the visual styles were copied, a fake prompt cannot make the authenticator sign anything on the real site's behalf!

Since the browser may not know which authenticator the user intends, it will prompt the user for the platform authenticator or for a roaming authenticator like a Yubikey. Platform authenticators combine biometrics like Face ID, Touch ID, or Windows Hello with the trusted platform module or secure enclave to authenticate, while roaming authenticators may require a presence test and optionally a PIN. This extra step also protects the user’s privacy from any drive-by client calls. When a biometric or PIN is verified, the server receives a flag that the user has been verified. Biometric and PIN data are never sent to the server. A standard Yubikey without a PIN will appear as unverified.
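That "verified" flag is a single bit in the authenticator data the server receives. Here is a small sketch of reading it, assuming the layout from the WebAuthn spec (32 bytes of relying party ID hash, one flags byte, then a 4-byte big-endian signature counter). The class and function names are invented for this example.

```python
import struct
from dataclasses import dataclass

FLAG_USER_PRESENT = 0x01   # bit 0: someone touched the authenticator
FLAG_USER_VERIFIED = 0x04  # bit 2: a biometric or PIN check passed


@dataclass
class AuthenticatorFlags:
    user_present: bool
    user_verified: bool
    sign_count: int


def parse_authenticator_data(data: bytes) -> AuthenticatorFlags:
    """Pull the presence and verification bits out of authenticator data."""
    if len(data) < 37:
        raise ValueError("authenticator data is too short")
    flags = data[32]
    (sign_count,) = struct.unpack(">I", data[33:37])
    return AuthenticatorFlags(
        user_present=bool(flags & FLAG_USER_PRESENT),
        user_verified=bool(flags & FLAG_USER_VERIFIED),
        sign_count=sign_count,
    )
```

A tap on a Yubikey with no PIN set would come back with `user_present` true and `user_verified` false, and a server that wants the stronger guarantee can reject that response.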

The work continues on making WebAuthn easier and better to use. Apple recently released Passkeys with iOS 16, which allow you to use your iPhone as a hardware authenticator for any other device, including iPads and Windows machines. This effectively turns your iPhone’s Secure Enclave into a roaming security key that you use on other machines, giving you the best of both worlds. You benefit from Apple’s industry-leading on-device security processors and also have the ability to use that on your other machines too. All the existing guarantees of WebAuthn are carried over, including the fact that each WebAuthn credential is bound to a single website. Today, you can choose "Add a new Android phone" in Chrome and scan it with your iOS device. Safari has not caught up yet; we expect this later.

In conclusion, push notifications for authentication should be considered harmful. You should not use them and you should prioritize moving towards hardware authentication tokens such as Yubikeys. It is worth the hardware and training cost to do this.

As a bonus, here's one of the ways that the web3 people get this kind of thing more right than wrong. They use the Ethereum cryptosystem as an authentication factor.

EIP-4361: Sign-In with Ethereum

WebAuthn is a protocol where you get a hardware element to sign a message to prove that you own the keypair in question, and that allows authentication to happen via the "something you have" flow. In Ethereum and other blockchain ecosystems, everyone has a keypair that signs messages for instructions like "transfer an NFT to another address" or "send this address some money". This is enough to let you construct an authentication factor. The strength of this authentication factor is... questionable, but by conforming to EIP-4361, you can turn an Ethereum keypair into an authentication factor for web applications. This will hook into a hardware wallet with WalletConnect or a software wallet with a browser extension like MetaMask. This works in a very similar way to how WebAuthn works, but with these core principles:

- Authentication is done by confirming that the user has access to a private key to sign a message that is validated with the public key, much like WebAuthn.

- Wallet apps show you the URL of the site you are trying to authenticate with and there is no way to easily forge that.

- Generating a new Ethereum keypair is free and anyone can do it without having to purchase hardware to act as key escrow.
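To show where the site URL in the second point comes from, here is a sketch of the plain-text message a site asks the wallet to sign. The layout follows the EIP-4361 template as I understand it, so check the EIP before relying on the exact wording. The address is a placeholder, the function names are hypothetical, and signing and signature recovery are left out because they need an Ethereum library.

```python
import secrets
from datetime import datetime, timezone

HEADER_SUFFIX = " wants you to sign in with your Ethereum account:"


def build_siwe_message(domain: str, address: str, statement: str, uri: str,
                       chain_id: int, nonce: str, issued_at: str) -> str:
    """Assemble the human-readable sign-in message for the wallet to sign."""
    return (
        f"{domain}{HEADER_SUFFIX}\n"
        f"{address}\n\n"
        f"{statement}\n\n"
        f"URI: {uri}\n"
        f"Version: 1\n"
        f"Chain ID: {chain_id}\n"
        f"Nonce: {nonce}\n"
        f"Issued At: {issued_at}"
    )


def domain_matches(message: str, expected_domain: str) -> bool:
    """Server-side check that the signed message names this site's domain."""
    first_line = message.split("\n", 1)[0]
    return first_line == expected_domain + HEADER_SUFFIX


message = build_siwe_message(
    domain="xeiaso.net",
    address="0x5aAeb6053F3E94C9b9A09f33669435E7Ef1BeAed",  # placeholder address
    statement="Sign in to the blog admin panel.",           # made-up statement
    uri="https://xeiaso.net/login",                         # hypothetical URL
    chain_id=1,
    nonce=secrets.token_hex(8),  # fresh per login attempt to stop replays
    issued_at=datetime.now(timezone.utc).isoformat(timespec="seconds"),
)
print(message)
```

The domain sits in the first line of the signed text, so the wallet can display it and the server can refuse any message that names a different site.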

But due to the implementation of all of this, it has the following weaknesses:

- There’s no protocol level way to tell what kind of secure element the user is using, if any. There are several different types of hardware "cold wallets" and one of the most commonly used strategies is to make a "hot wallet" that’s just a keypair managed by a browser extension such as MetaMask.

- In many web3 applications, the Ethereum keypair is the only authentication factor. This means if someone manages to get access to your keypair or recovery phrase somehow, they can steal your apes and you can’t get them back without trying to negotiate with the threat actor.

- Extracting the private key of an Ethereum address is considered a security feature and people are encouraged to write their private keys down on paper and store them somewhere safe. The first-time user experience of many Ethereum space things will force you to write down the recovery phrase by quizzing you on what it is, and I can only imagine how many people are doing that with an actually secure mechanism. There are some interesting products available for doing things like punching your seed phrase into metal so you can put that metal object in a safety deposit box.

- This is implemented with browser extensions instead of being properly embedded into the OS itself, meaning that it is theoretically possible to phish, but it looks like that is very difficult in practice.

Facts and circumstances may have changed since publication. Please contact me before jumping to conclusions if something seems wrong or unclear.

Tags: security, infosec, webauthn, web3, collab