Introducing Lokahi

Published on , 1353 words, 5 minutes to read

Lokahi is an HTTP service uptime checking and notification service. Currently lokahi does very little. Given a URL and a webhook URL, lokahi checks that URL every minute to ensure it is up. If the URL goes down or the health workers have trouble reaching it, the service is flagged as down and a webhook is sent out.

Stack

| What | Role |
|---|---|
| Postgres | Database |
| Go | Language |
| Twirp | API layer |
| Protobuf | Serialization |
| Nats | Message queue |
| Cobra | CLI |

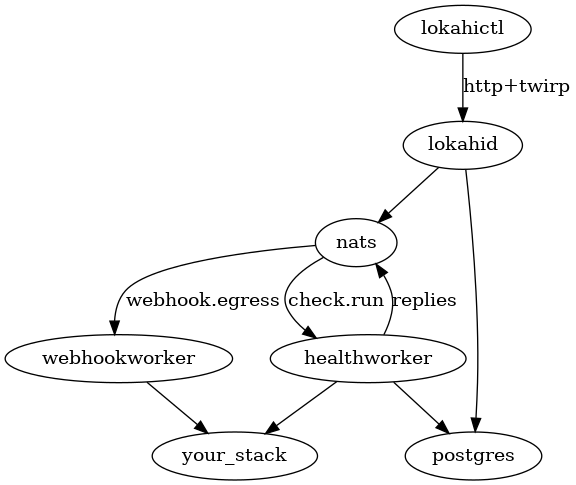

Components

Interrelation graph:

lokahictl

The command line interface. It currently outputs everything in JSON and has a few options:

$ ./bin/lokahictl
See https://github.com/Xe/lokahi for more information

Usage:
  lokahictl [command]

Available Commands:
  create      creates a check
  create_load creates a bunch of checks
  delete      deletes a check
  get         dumps information about a check
  help        Help about any command
  list        lists all checks that you have permission to access
  put         puts updates to a check
  run         runs a check
  runstats    gets performance information

Flags:
  -h, --help            help for lokahictl
      --server string   http url of the lokahid instance (default "http://AzureDiamond:hunter2@127.0.0.1:24253")

Use "lokahictl [command] --help" for more information about a command.

Each of these subcommands has help and most of them have additional flags.

lokahid

This is the main API server. It exposes twirp services defined in xe.github.lokahi and xe.github.lokahi.admin.

It is configured using environment variables like so:

# Username and password to use for checking authentication
# http://bash.org/?244321
USERPASS=AzureDiamond:hunter2

# Postgres database URL in heroku-ish format
DATABASE_URL=postgres://postgres:hunter2@127.0.0.1:5432/postgres?sslmode=disable

# Nats queue URL
NATS_URL=nats://127.0.0.1:4222

# TCP port to listen on for HTTP traffic
PORT=9001
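For readers who want to see how a Go service might consume these variables, here is a minimal sketch. The `Config` type and `LoadConfig` function are hypothetical names made up for this example and are not taken from the lokahi source; only the four variable names come from the listing above.

```go
package main

import (
	"fmt"
	"os"
)

// Config mirrors the four environment variables listed above.
// The type itself is hypothetical, not lokahi's own.
type Config struct {
	UserPass    string // USERPASS, in "username:password" form
	DatabaseURL string // DATABASE_URL
	NatsURL     string // NATS_URL
	Port        string // PORT
}

// LoadConfig reads every variable through lookup and reports all
// missing or empty ones in a single error.
func LoadConfig(lookup func(string) (string, bool)) (Config, error) {
	var missing []string
	get := func(key string) string {
		v, ok := lookup(key)
		if !ok || v == "" {
			missing = append(missing, key)
		}
		return v
	}
	cfg := Config{
		UserPass:    get("USERPASS"),
		DatabaseURL: get("DATABASE_URL"),
		NatsURL:     get("NATS_URL"),
		Port:        get("PORT"),
	}
	if len(missing) > 0 {
		return Config{}, fmt.Errorf("missing environment variables: %v", missing)
	}
	return cfg, nil
}

func main() {
	cfg, err := LoadConfig(os.LookupEnv)
	if err != nil {
		fmt.Println("config error:", err)
		return
	}
	fmt.Println("would listen on port", cfg.Port)
}
```

Passing the lookup function in, rather than calling `os.LookupEnv` directly, keeps the loader easy to exercise without touching the real environment.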

Every minute, lokahid scans for every check that is set to run minutely and runs them. Running checks at any interval other than once a minute is currently unsupported.

healthworker

healthworker listens on the nats queue check.run and returns health information about the service being checked.

webhookworker

webhookworker listens on the nats queue webhook.egress and sends webhooks based on the input it's given.

Challenges Faced During Development

ORM Issues

Initially, I implemented this using gorm and started to run into a lot of problems when using it in anything but small-scale circumstances. Gorm spun up way too many database connections (as many as a new one for every operation!) and quickly exhausted postgres' pool of client connections.

I rewrote this to use database/sql and sqlx and all of the tests passed the first time I tried to run this, no joke.

Scaling to 50,000 Checks

This one was actually a lot harder than I thought it would be, and not for the reasons I thought it would be. One of the main things that I discovered when I was trying to scale this was that I was putting way too much load on the database way too quickly.

The solution to this was to use bundler to batch-write the most frequently written database items (see here). Even then, limiting the database connection count was needed in order to scale to the full 50,000 checks needed for this to exist as more than a proof of concept.

This service can handle 50,000 HTTP checks in a minute. The only part that gets backed up currently is webhook egress, but that is likely fixable with further optimization on the HTTP checking and webhook egress paths.

Basic Usage

To set up an instance of lokahi on a machine with Docker Compose installed, create a docker compose manifest with the following in it:

version: "3.1"

services:
  # The postgres database where all lokahi data is stored.
  db:
    image: postgres:alpine
    restart: always
    environment:
      POSTGRES_PASSWORD: hunter2
    command: postgres -c max_connections=1000

  # The message queue for lokahid and its workers.
  nats:
    image: nats:1.0.4

  # The service that runs http healthchecks. This is its own service so it can
  # be scaled independently.
  healthworker:
    image: xena/lokahi:latest
    restart: always
    depends_on:
      - "db"
      - "nats"
    environment:
      NATS_URL: nats://nats:4222
      DATABASE_URL: postgres://postgres:hunter2@db:5432/postgres?sslmode=disable
    command: healthworker

  # The service that sends out webhooks in response to http healthchecks. This
  # is also its own service so it can be scaled independently.
  webhookworker:
    image: xena/lokahi:latest
    restart: always
    depends_on:
      - "db"
      - "nats"
    environment:
      NATS_URL: nats://nats:4222
      DATABASE_URL: postgres://postgres:hunter2@db:5432/postgres?sslmode=disable
    command: webhookworker

  # The main API server. This is what you port forward to.
  lokahid:
    image: xena/lokahi:latest
    restart: always
    depends_on:
      - "db"
      - "nats"
    environment:
      USERPASS: AzureDiamond:hunter2 # want ideas? https://strongpasswordgenerator.com/
      NATS_URL: nats://nats:4222
      DATABASE_URL: postgres://postgres:hunter2@db:5432/postgres?sslmode=disable
      PORT: 24253
    ports:
      - 24253:24253

  # This is a sample webhook server that prints information about incoming
  # webhooks.
  samplehook:
    image: xena/lokahi:latest
    restart: always
    depends_on:
      - "lokahid"
    environment:
      PORT: 9001
    command: sample_hook

  # Duke is a service that gets approximately 50% uptime by changing between up
  # and down every minute. When it's up, it responds to every HTTP request with
  # 200. When it's down, it responds to every HTTP request with 500.
  duke:
    image: xena/lokahi:latest
    restart: always
    depends_on:
      - "samplehook"
    environment:
      PORT: 9001
    command: duke-of-york

Start this with docker-compose up -d.

Configuration

Open ~/.lokahictl.hcl and enter the following:

server = "http://AzureDiamond:hunter2@127.0.0.1:24253"

Save the file, and lokahictl is now configured to work with the local copy of lokahi.

Creating a check

To create a check against duke reporting to samplehook:

$ lokahictl create \
    --every 60 \
    --webhook-url http://samplehook:9001/twirp/github.xe.lokahi.Webhook/Handle \
    --url http://duke:9001 \
    --playbook-url https://github.com/Xe/lokahi/wiki/duke-of-york-Playbook
{
  "id": "a5c7179a-0d3a-11e8-b53d-8faa88cfa70c",
  "url": "http://duke:9001",
  "webhook_url": "http://samplehook:9001/twirp/github.xe.lokahi.Webhook/Handle",
  "every": 60,
  "playbook_url": "https://github.com/Xe/lokahi/wiki/duke-of-york-Playbook"
}

Now attach to samplehook's logs and wait for it:

$ docker-compose logs -f samplehook

2018/02/09 06:27:15 check id: a5c7179a-0d3a-11e8-b53d-8faa88cfa70c,

state: DOWN, latency: 2.265561ms, status code: 500,

playbook url: https://github.com/Xe/lokahi/wiki/duke-of-york-Playbook
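Because lokahictl prints JSON, the output of the create command above can be consumed by other programs. The following sketch decodes it in Go. The `Check` struct and `parseCheck` function are hypothetical names for this example; the field names are taken from the JSON shown above, not from lokahi's generated types.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Check matches the JSON fields printed by "lokahictl create" above.
// The struct is written by hand for this sketch.
type Check struct {
	ID          string `json:"id"`
	URL         string `json:"url"`
	WebhookURL  string `json:"webhook_url"`
	Every       int    `json:"every"`
	PlaybookURL string `json:"playbook_url"`
}

// parseCheck decodes one check from lokahictl's JSON output.
func parseCheck(data []byte) (Check, error) {
	var c Check
	err := json.Unmarshal(data, &c)
	return c, err
}

func main() {
	out := []byte(`{
	  "id": "a5c7179a-0d3a-11e8-b53d-8faa88cfa70c",
	  "url": "http://duke:9001",
	  "webhook_url": "http://samplehook:9001/twirp/github.xe.lokahi.Webhook/Handle",
	  "every": 60,
	  "playbook_url": "https://github.com/Xe/lokahi/wiki/duke-of-york-Playbook"
	}`)
	c, err := parseCheck(out)
	if err != nil {
		fmt.Println("decode failed:", err)
		return
	}
	fmt.Printf("check %s polls %s every %d seconds\n", c.ID, c.URL, c.Every)
}
```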

Webhooks

Webhooks receive an HTTP POST of a protobuf-encoded xe.github.lokahi.CheckStatus with the following additional HTTP headers:

| Key | Value |
|---|---|
| Accept | application/protobuf |
| Content-Type | application/protobuf |
| User-Agent | lokahi/dev (+https://github.com/Xe/lokahi) |

Webhook server implementations should probably store check IDs in a database of some kind and trigger additional logic, such as PagerDuty API calls or similar things. The lokahi standard distribution includes Discord and Slack webhook receivers.

JSON webhook support is not currently implemented, but is being tracked at this GitHub issue.

Call for Contributions

Lokahi is pretty great as it is, but to be even better lokahi needs a bunch of work, experience reports and people willing to contribute to the project.

If making a better HTTP uptime service sounds like something you want to do with your free time, please get involved! Ask questions, fix issues, help newcomers and help us all work together to make the best HTTP uptime service we can.

Social media links for discussion on this article:

Mastodon: https://mst3k.interlinked.me/@cadey/99494112049682603

Reddit: https://www.reddit.com/r/golang/comments/7wbr4o/introducting_lokahi_http_healthchecking_service/

Hacker News: https://news.ycombinator.com/item?id=16338465

Facts and circumstances may have changed since publication. Please contact me before jumping to conclusions if something seems wrong or unclear.

Tags: hackweek, release, go, monitoring